The landscape of mobile computing is undergoing a seismic shift, one that promises to finally fulfill the “post-PC” prophecy that has hovered over the tablet market for more than a decade. For years, iPad coverage has been dominated by discussions of hardware supremacy: M-series chips that rival desktops and displays that outshine high-end televisions. However, the software has often lagged behind the silicon. That narrative is changing rapidly. With the recent confirmation that Apple has solidified a partnership with Google to integrate the Gemini model into Siri, the iPad is poised to become not just a canvas for creativity, but a hub of generative intelligence.

This strategic move, prioritizing Google’s established infrastructure over competitors like Anthropic, signals a new era for the Apple ecosystem. It is not merely a search engine update; it is a fundamental restructuring of how users interact with iPadOS. By leveraging Gemini, Siri is set to evolve from a command-response utility into a proactive, context-aware agent capable of complex reasoning. This article delves into the technical ramifications of this partnership, exploring how it transforms the iPad workflow, how it affects the broader ecosystem from the Apple Vision Pro to the HomePod, and what it means for the future of privacy in personal computing.

The Architecture of Intelligence: Siri Meets Gemini

To understand the magnitude of this update, we must look beyond the marketing buzz and analyze the technical architecture. Historically, Siri has relied on a mix of on-device processing and relatively simple server-side queries. The integration of Google’s Gemini model introduces a Large Language Model (LLM) capability directly into the system core. This hybrid approach aims to balance the raw power of cloud computing with Apple’s stringent privacy standards.

Hybrid Processing and Privacy

The integration uses a tiered processing system. For basic tasks, such as launching apps, setting timers, or controlling the local device, Apple’s on-device Neural Engine handles the workload. Keeping these requests on the device keeps latency low and minimizes the data that leaves it. However, when a query requires synthesis, creative generation, or complex data analysis, the request is handed off to Gemini via Apple’s Private Cloud Compute infrastructure.
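The tiered routing described above can be pictured with a small sketch. Everything here is an assumption made for illustration: the intent labels, the `Tier` enum, and the `route_request` function are hypothetical names, not part of any Apple or Google API, and the real decision logic has not been published.

```python
from enum import Enum


class Tier(Enum):
    ON_DEVICE = "on_device"  # handled by the local Neural Engine
    CLOUD = "cloud"          # handed off via Private Cloud Compute


# Hypothetical intent labels, taken from the examples in the text.
LOCAL_INTENTS = {"launch_app", "set_timer", "device_control"}


def route_request(intent: str) -> Tier:
    """Pick a processing tier for a request based on its intent label."""
    if intent in LOCAL_INTENTS:
        return Tier.ON_DEVICE
    # Synthesis, creative generation, and complex analysis fall through here.
    return Tier.CLOUD


if __name__ == "__main__":
    for intent in ("set_timer", "creative_generation"):
        print(intent, "->", route_request(intent).value)
```

The sketch sends anything it does not recognize to the cloud tier; a real system could just as plausibly fail closed and keep unknown requests on the device.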

This distinction is critical. Unlike a standard browser query, where user data is often harvested, this integration reportedly anonymizes the request before it reaches Google’s servers. This allows Apple to maintain its stance on user privacy, a cornerstone of its brand, while accessing the reasoning capabilities of one of the world’s most advanced AI models.
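How the anonymization actually works has not been detailed, so the following is only a minimal sketch of the general idea: strip identifying fields from a request before forwarding it. The field names and the `anonymize` function are hypothetical and chosen purely for illustration.

```python
# Hypothetical set of fields that would identify a user or device.
IDENTIFYING_FIELDS = {"apple_id", "device_id", "ip_address", "location"}


def anonymize(request: dict) -> dict:
    """Return a copy of the request without identifying fields."""
    return {
        key: value
        for key, value in request.items()
        if key not in IDENTIFYING_FIELDS
    }


if __name__ == "__main__":
    original = {
        "apple_id": "user@example.com",
        "device_id": "ABC123",
        "query": "Summarize this article",
    }
    print(anonymize(original))
```

Dropping fields is the simplest possible approach; it does nothing about identifying details inside the query text itself, which a production system would also have to consider.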

Why Google and Not Anthropic?

Industry analysts have long speculated on who Apple would partner with. While Anthropic’s Claude models are celebrated for their nuance and safety, Google offers something distinct: an unparalleled knowledge graph and search integration. For the iPad user, this is vital. An iPad is often a research tool. By partnering with Google, Apple aims to ensure that Siri doesn’t just “hallucinate” answers but grounds them in real-time information. This synergy suggests that future iOS updates will focus heavily on citation and verification, features essential for the academic and professional markets that rely on the iPad Pro.

Transforming the iPad Workflow: Real-World Scenarios

The theoretical capabilities of LLMs are well documented, but how does this translate to the actual usage of an iPad? The introduction of a Gemini-powered Siri fundamentally alters the value proposition of the tablet form factor, particularly when paired with accessories.

The “Smart Script” Revolution with Apple Pencil

Recent Apple Pencil coverage has focused on hardware features like haptic feedback and barrel roll. However, the software integration with Gemini takes this further. Imagine writing a rough outline for a marketing strategy in Apple Notes using the Apple Pencil. With the new integration, you can circle that outline and ask Siri to “flesh this out into a full proposal.” Siri, leveraging Gemini, reads your handwriting, understands the context, and generates a typed document below your handwritten notes, complete with formatting.

This could extend to using the Apple Pencil with the Apple Vision Pro as well. As spatial computing evolves, the ability to use the Pencil as a precise input device while Siri handles the generative heavy lifting creates a workflow that feels almost telepathic.

Case Study: The Research Student

Consider a university student utilizing an iPad Air. Previously, their workflow involved split-screening Safari and a document editor, manually copying and pasting citations. With the new Siri:

- Input: The student highlights a PDF in the Files app and asks Siri, “Compare the arguments in this paper with the current geopolitical trends found in Google Search.”

- Process: Siri analyzes the local file (on-device/private cloud) and queries Gemini for current events (search integration).

- Output: A summarized comparison table is generated and inserted directly into a Freeform board or a Pages document.
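The input, process, and output steps above can be sketched as a small pipeline. This is an illustration under stated assumptions: `extract_arguments` stands in for the on-device or private-cloud analysis and `search_trend` stands in for the Gemini-backed search step. Both are hypothetical callables supplied by the caller, not real APIs.

```python
from typing import Callable


def compare_paper_to_trends(
    pdf_text: str,
    extract_arguments: Callable[[str], list[str]],
    search_trend: Callable[[str], str],
) -> list[dict[str, str]]:
    """Build comparison rows pairing each argument with a current trend."""
    rows = []
    for argument in extract_arguments(pdf_text):  # local analysis step
        rows.append({
            "argument": argument,
            "current_trend": search_trend(argument),  # cloud search step
        })
    return rows


if __name__ == "__main__":
    # Stub implementations so the sketch runs without any network access.
    def fake_extract(text: str) -> list[str]:
        return [line.strip() for line in text.splitlines() if line.strip()]

    def fake_search(argument: str) -> str:
        return f"(search results related to: {argument})"

    table = compare_paper_to_trends(
        "Trade blocs are fragmenting\nEnergy policy drives alliances",
        fake_extract,
        fake_search,
    )
    for row in table:
        print(row["argument"], "|", row["current_trend"])
```

Passing the two steps in as functions keeps the local and cloud halves separate, which mirrors the split the scenario describes.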

This capability moves the iPad closer to the “vision board” concept, where the device becomes a dynamic surface for thought rather than just a static screen.

Ecosystem Ripple Effects: Beyond the Tablet

Apple’s strength lies in its ecosystem continuity. A major upgrade to Siri on iPadOS inevitably bleeds into other product lines, creating a unified experience. The integration of Gemini is not an island; it is a tide that lifts all boats, from the smallest accessories to the most advanced headsets.

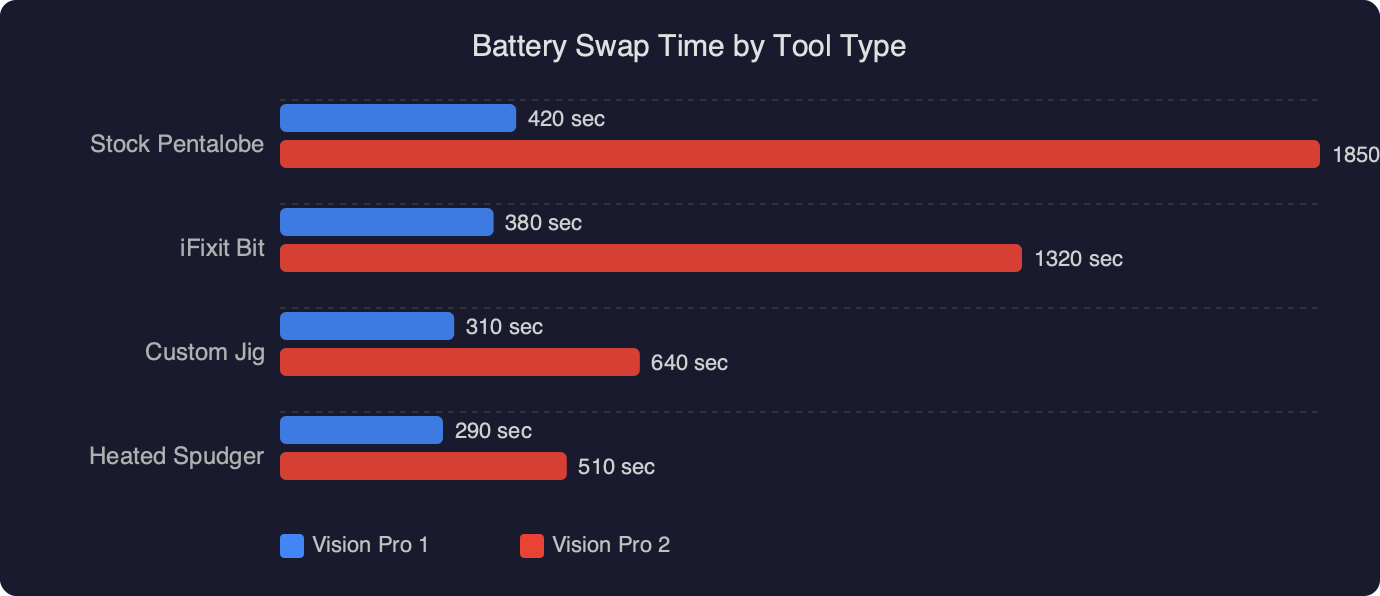

The Vision Pro and Spatial Computing

Apple Vision Pro coverage has been dominated by discussions of immersive media, but the productivity angle relies heavily on voice interaction. Typing in mid-air is cumbersome. A Gemini-backed Siri transforms the Vision Pro from a media consumption device into a legitimate workstation. Users can verbally dictate complex coding structures or email responses that Siri parses and perfects. Furthermore, rumors about a Vision Pro wand suggest that future controllers might include dedicated AI-trigger buttons, allowing for multimodal input (pointing at an object and asking Siri to identify or modify it).

The Smart Home Hub

The iPad often serves as a home controller, but the intelligence has been limited. With this update, the HomePod and HomePod mini get a significant boost. Because the processing logic is shared across devices signed in to the same Apple ID, a request made to a HomePod can leverage the same Gemini backend. This means you could ask your HomePod, “Plan a dinner menu based on the dietary restrictions I saved in my iPad Notes,” and it will execute the task. This level of cross-device context awareness is a game-changer for the Apple ecosystem.

Wearables and Health

For the Apple Watch and Apple’s health features, the implications are profound. While the Watch itself may not run the full Gemini model, it acts as an input terminal. A user could describe a set of symptoms to their Watch. Siri, processing this via the paired iPhone or iPad, could securely cross-reference the description with data stored in the Health app and provide a summary for a doctor. This moves health tracking from passive data collection to active health intelligence.

Hardware Requirements and the Legacy Divide

With great power comes great system requirements. One of the most contentious aspects of this transition will be the hardware cutoff. Running even a distilled version of Gemini, or managing the secure handshake with the cloud, requires significant NPU (Neural Processing Unit) performance.

The M-Series Exclusivity

It is highly probable that the full suite of these features will be restricted to iPads with M1 chips or later. This creates fragmentation in the user base. While iPhone coverage often highlights the annual upgrade cycle, iPad users tend to keep their devices for four to five years. Owners of older iPad Pros with A-series chips may find themselves locked out of the AI revolution, relegated to the “classic” Siri experience.
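If the cutoff does land at M1, the eligibility check reduces to a simple rule on the chip name. The sketch below assumes that speculated cutoff and a hypothetical `supports_full_features` helper; it is not how Apple determines device support.

```python
import re

# Assumed cutoff, taken from the speculation in the text: M1 or later.
MIN_M_GENERATION = 1


def supports_full_features(chip: str) -> bool:
    """Return True if the chip name is an M-series chip at or above the cutoff."""
    match = re.match(r"M(\d+)", chip.strip())
    if match is None:
        # A-series and any other chips fall back to the "classic" experience.
        return False
    return int(match.group(1)) >= MIN_M_GENERATION


if __name__ == "__main__":
    for chip in ("M1", "M4", "A12Z Bionic"):
        print(chip, "->", supports_full_features(chip))
```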

Nostalgia and the Simplicity of the Past

This technological leap creates a stark contrast with Apple’s history. There is a growing subculture driving an iPod revival, where users yearn for devices that don’t think for them. The complexity of a Gemini-powered iPad stands in opposition to the single-purpose utility celebrated by fans of the iPod Classic and iPod Mini.

Interestingly, we are seeing a resurgence of interest in non-connected devices. iPod Nano and iPod Shuffle forums are active with users modifying these devices to escape “always-on” AI monitoring. Even the iPod Touch, a device that was essentially a proto-iPhone, is viewed through a lens of nostalgia for a simpler iOS era. As the iPad becomes a hyper-intelligent assistant, the market for “dumb” technology (like a modded iPod Video) may actually grow as a form of digital detox.

Accessories and Peripherals in the AI Era

The physical interaction with the iPad is also evolving. Apple accessories are no longer just about protective cases; they are about enabling AI workflows.

Audio Intelligence

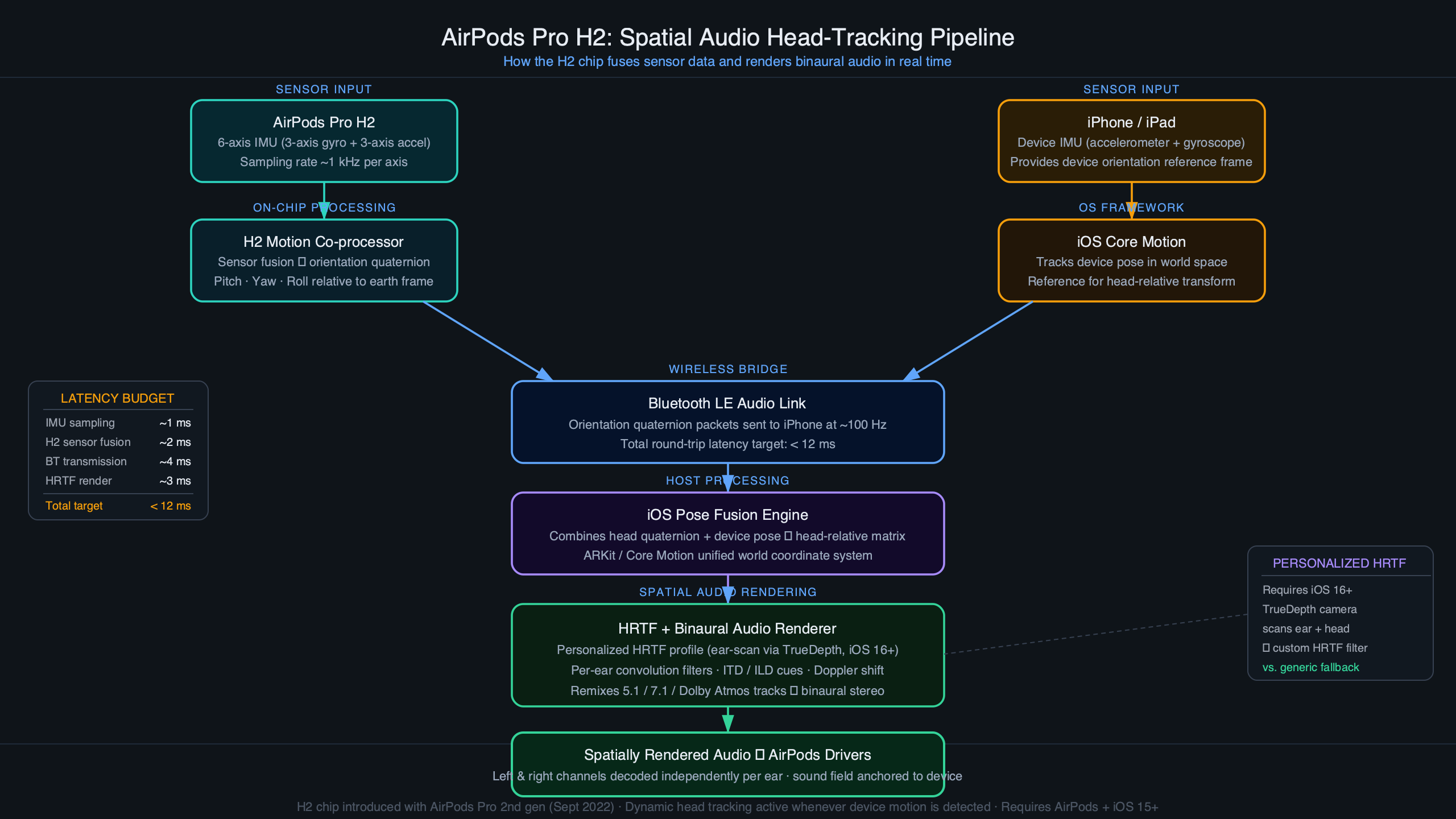

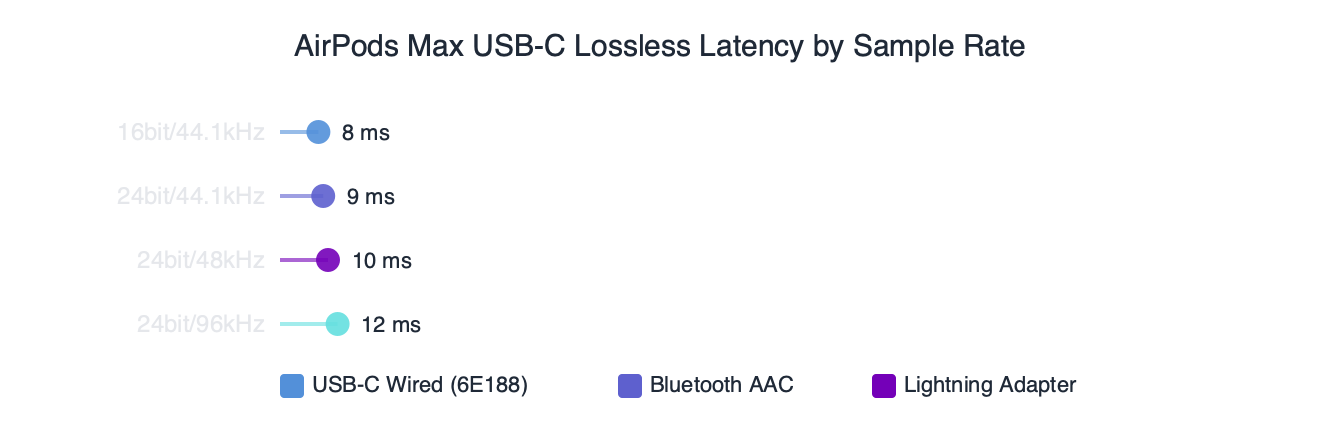

The role of headphones is shifting. Reports on the AirPods Pro suggest that future firmware updates will allow for better conversation awareness, which Siri can process. Imagine wearing AirPods and attending a lecture; the iPad records the audio, and Siri (via Gemini) transcribes and summarizes it in real time, whispering key points back to you if requested. Reports on the AirPods Max indicate that their high-fidelity audio could be used for AI-driven soundscape generation, aiding focus.

Even for the standard AirPods, the discussion has turned to how voice isolation helps Siri understand commands in crowded environments, a necessity for mobile AI use.

Tracking and Context

AirTag coverage has generally focused on finding lost keys. However, in an AI context, AirTags provide location context. “Siri, did I leave my bag at the office?” requires the AI to understand “bag” (linked to an AirTag), “office” (location history), and the time delta. The Gemini integration allows for natural language queries regarding the location of items, making the Find My network more accessible.
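To make the three pieces of that query concrete (the item, the place, and the time delta), here is a minimal sketch. The `Sighting` record and the `answer_left_at` function are hypothetical, and the data is supplied by the caller rather than read from the Find My network.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Sighting:
    place: str          # named location where the tracker was last seen
    seen_at: datetime   # when it was last seen there


def answer_left_at(
    item: str,
    place: str,
    sightings: dict[str, Sighting],
    now: datetime,
) -> str:
    """Answer 'did I leave my <item> at the <place>?' from last-seen data."""
    sighting = sightings.get(item)
    if sighting is None:
        return f"I don't have a tracker linked to your {item}."
    # Time delta between now and the last sighting, in whole minutes.
    minutes = int((now - sighting.seen_at).total_seconds() // 60)
    if sighting.place == place:
        return f"Yes, your {item} was last seen at the {place} {minutes} minutes ago."
    return f"No, your {item} was last seen at the {sighting.place} {minutes} minutes ago."


if __name__ == "__main__":
    now = datetime(2025, 1, 1, 18, 0)
    data = {"bag": Sighting("office", datetime(2025, 1, 1, 17, 15))}
    print(answer_left_at("bag", "office", data, now))
```

The language model's job in this scenario is the part the sketch skips: mapping the spoken sentence onto the `item` and `place` arguments.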

The Living Room Battleground

Finally, the focus of the Apple TV and its marketing is shifting. The Apple TV is becoming the visual interface for the smart home’s brain. With Siri/Gemini, you could ask your TV, “Show me movies that feel like ‘Inception’ but are shorter than two hours,” and get a curated list with reasoning. This moves beyond metadata matching to semantic understanding of content.

Challenges and Considerations

Despite the optimism, there are hurdles. Augmented reality work frequently highlights the difficulty of overlaying data on the real world without clutter. Similarly, AI text generation can suffer from “bloat.” Users need concise answers, not essays.

Furthermore, the price of Vision Pro accessories highlights the cost of entry. To fully utilize this ecosystem of iPad Pro, Vision Pro, and AirPods Pro, the investment is massive. There is a risk that the “best” version of Siri becomes a luxury product, creating a digital divide within the Apple user base.

Conclusion

The integration of Google’s Gemini into Siri marks a pivotal moment for the iPad. It represents the maturation of the tablet from a media consumption slate into a cognitive partner. By combining Apple’s hardware excellence and privacy framework with Google’s data infrastructure, the iPad is set to offer a computing experience that is both powerful and personal.

While this advancement casts a shadow over older hardware and contrasts sharply with the simplicity celebrated by iPod enthusiasts, it is the necessary step forward to keep the platform relevant. As we look toward the release of these features next year, the question is no longer “What can an iPad do?” but rather “What can’t it figure out?” For developers, creatives, and students, the smart iPad is finally arriving, bringing the entire Apple ecosystem along for the ride.