Tim Cook is doing that thing again.

You know the pattern. We all saw it happen in 2014 before the Watch, and again in 2023 before the Vision Pro was unveiled. Cook starts making these vague, philosophical comments about a technology “inevitably” becoming part of our daily lives.

And he’s doing it right now with “Visual Intelligence.”

If you caught his latest interview comments, or the chatter leading up to the March 4 event, you might have noticed the subtle shift in vocabulary. For the last two years, it was all about “Spatial Computing.” Now? The focus is drifting toward AI wearables that see what you see. And honestly, it’s about time.

I’ve been using the Vision Pro since launch day back in ’24. I love the thing for movies. I tolerate it for work. But let’s be real: nobody wants to wear a 600-gram computer on their face while walking down the street to grab a coffee. That was never the endgame.

The bridge to actual smart glasses

The industry has been waiting for Apple to shrink the tech down. We wanted “Apple Glass” immediately, but physics had other plans. What’s interesting about the current rumors is that Apple seems to be acknowledging that full AR isn’t necessary for the next step. They are pivoting to AI as the killer feature, rather than 3D digital overlays.

Think about it. The Meta Ray-Bans proved people are okay with cameras on their faces if the form factor is normal. I wear mine almost daily just to capture quick videos or ask the AI what I’m looking at. Apple’s “Visual Intelligence” seems to be their answer to that, but integrated deeply into the ecosystem we’re already trapped in.

I was digging through the logs in the visionOS 3.1 update from January, and there are already hooks for this. The system is getting much better at identifying objects in the periphery without active user intent. That’s battery-draining on a headset, but on a dedicated low-power wearable? That’s probably the product.

It’s not about the screen anymore

Here is where I think people are getting it wrong. Everyone is expecting the next device to be a cheaper Vision Pro—maybe a “Vision Air” with lower resolution screens. Sure, that might happen. But the real money is on a device with no screens.

If the rumors hold up, Apple is looking at a wearable that relies entirely on audio and visual input processing. Visual Intelligence isn’t about displaying a window in space; it’s about the computer understanding your environment.

I tested a similar workflow last week using a custom shortcut on my iPhone 17 Pro. I pointed the camera at a broken espresso machine (my beloved Breville, RIP) and asked the AI to diagnose the leak based on the steam pattern. It worked in seconds. Now imagine that without holding a phone. That’s the utility gap Cook is hinting at.

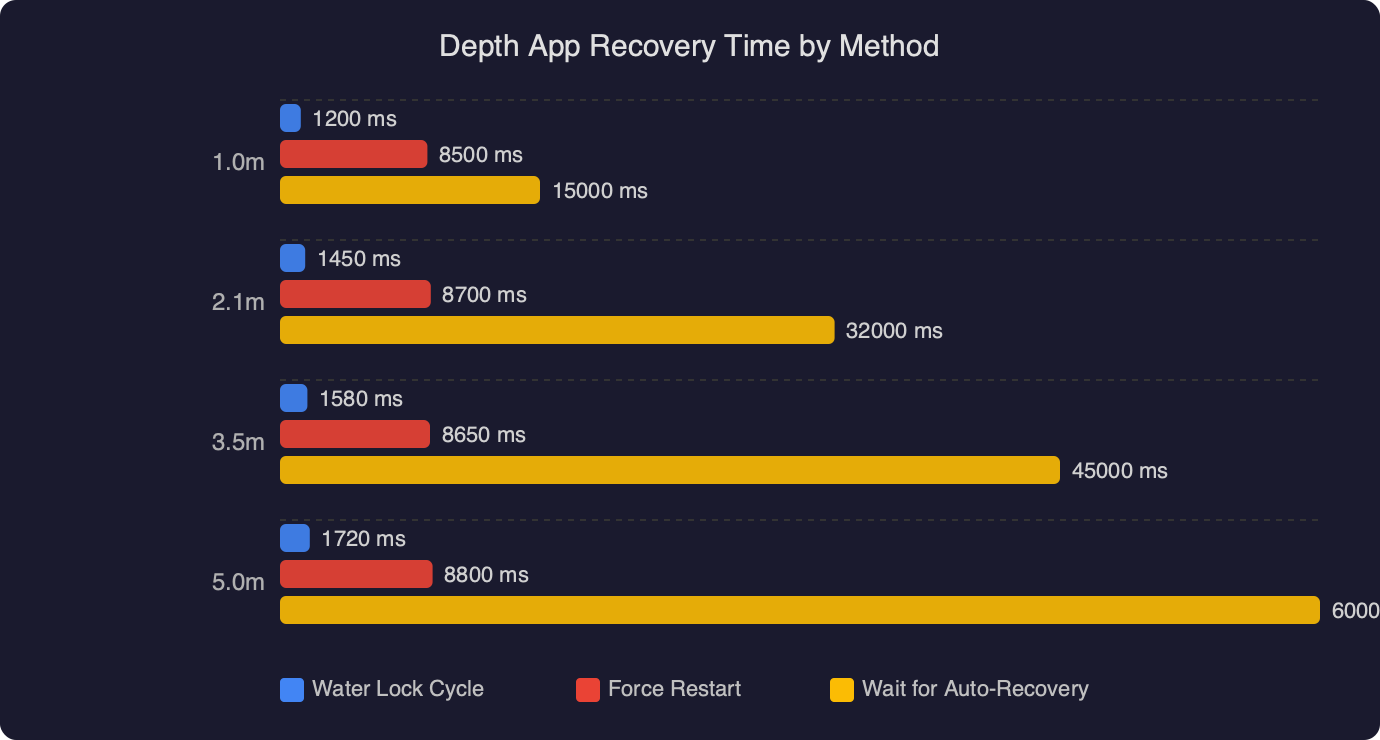

The technical hurdle: Latency and Heat

Running multimodal AI models in real time is heavy. My M4 MacBook Air still spins up the fans when I run local LLMs for image analysis. For a wearable to do this, Apple needs to offload the compute effectively or make the neural engine incredibly efficient. I suspect the “Visual Intelligence” push relies heavily on the tethering architecture they perfected with the Watch: your iPhone is the brain; the glasses are just the eyes and ears. That would sidestep the heat problem that plagued the early Vision Pro prototypes.

What to expect in March

We’re looking at a March 4 event that is ostensibly about Macs—likely the low-end MacBook refresh we’ve been hearing about. But Apple loves to use these “minor” events to set the stage for software. And I wouldn’t be shocked if we get a preview of the next generation of Siri capabilities that specifically leverage visual context.

They need to train us to talk to our computers about what we see. It feels unnatural at first. I felt ridiculous the first time I asked my Vision Pro to “identify this plant,” but now it’s second nature. Cook’s hints suggest they want to normalize this behavior before they drop the hardware that relies on it.

My skepticism remains

But I have to throw some cold water on the hype train for a second. Apple’s history with Siri suggests that “intelligence” is a strong word. Even with the Apple Intelligence rollout over the last year, I still get hallucinations. Just yesterday, the summarization feature told me a grim email from my accountant was “good news.”

If “Visual Intelligence” misinterprets a stop sign or a medication label, the stakes are higher. Wearing a camera that feeds data into a model requires a level of trust I’m not sure the tech giants have earned yet.

Plus, privacy. Always privacy. If Apple releases a wearable that is constantly scanning the environment to offer “intelligence,” the “opt-in” screens are going to be a nightmare of legalese.

The long game

The Vision Pro was the dev kit. It was the heavy, expensive proof of concept. The “AI Wearable” is the consumer product.

Tim Cook knows the iPhone era is plateauing. We aren’t buying new phones every year anymore. I’m certainly not—my upgrade cycle is now three years, minimum. They need a new category. By focusing on “Visual Intelligence,” they are trying to make the physical world clickable.

If they pull it off, we might finally stop staring at black rectangles in our hands. If they fail, it’s just another gadget that ends up in the drawer next to my iPad 3.

I’ll be watching the March event closely. Not for the MacBooks—I don’t need one—but for the adjectives. Listen to how they describe the camera. That’s where the future is hiding.