The Next Leap in Personal Audio: Unpacking the New AirPods Firmware Beta

In the ever-evolving world of personal technology, Apple continues to redefine the boundaries of what’s possible. The latest chapter in this story comes not as a new piece of hardware, but as a significant software update that promises to make our most personal devices smarter, more integrated, and more aware of our needs. Apple has just seeded a landmark public beta firmware, version 8B5014c, for its latest generation of audio products, including the AirPods Pro 2, the recently released AirPods Pro 3, and the new AirPods 4. This update is far more than a simple bug fix; it’s a foundational shift, transforming AirPods from passive audio accessories into active, intelligent companions.

This firmware release signals a clear direction for the future of the entire Apple ecosystem. It introduces a suite of features powered by advanced on-device machine learning, deepens the symbiotic relationship with devices like the iPhone and Apple Vision Pro, and even ventures into the proactive world of personal health monitoring. For developers, enthusiasts, and everyday users, this beta offers a tantalizing glimpse into a future where audio is not just heard, but understood. This article provides a technical deep dive into the features, implications, and best practices associated with this update, and explores how it strengthens Apple’s position in personal audio and across the wider Apple ecosystem.

What’s New in Firmware 8B5014c? An Overview of Key Features

The 8B5014c firmware is packed with innovations that touch upon user experience, accessibility, and ecosystem connectivity. While the changelog is extensive, four pillar features stand out as game-changers, each leveraging Apple’s unique integration of hardware and software to deliver experiences that are both powerful and intuitive.

Adaptive Conversation Clarity

Building upon the foundation of Conversation Awareness, this new feature represents a significant leap in computational audio. While previous iterations could simply lower media volume when a user started speaking, Adaptive Conversation Clarity uses the advanced Neural Engine in the H3 chip to actively analyze the soundscape. In a noisy environment like a bustling cafe or a windy street, it isolates the frequency range and cadence of the person you are directly conversing with, subtly enhancing their voice while actively suppressing competing background noise. This is more than a transparency mode: it acts as an intelligent audio filter for your real-world interactions, a meaningful improvement for anyone who has struggled to hold a conversation in a loud setting.
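The idea of enhancing one frequency range while suppressing the rest can be illustrated with a very small sketch. This is not Apple’s algorithm, which is described as a learned model running on the Neural Engine; it is a fixed band-emphasis filter, and the sample rate, the 300–3400 Hz speech band, and the attenuation factor are all assumptions chosen for the example.

```python
import cmath
import math

SAMPLE_RATE = 16_000          # Hz, assumed for this example
VOICE_BAND = (300.0, 3400.0)  # Hz, classic telephony speech band (assumption)
OUT_OF_BAND_GAIN = 0.25       # hypothetical attenuation for everything else


def dft(samples):
    """Naive O(n^2) discrete Fourier transform; fine for one short frame."""
    n = len(samples)
    return [
        sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def inverse_dft(spectrum):
    """Inverse of dft(); returns the real part of each reconstructed sample."""
    n = len(spectrum)
    return [
        (sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n).real
        for t in range(n)
    ]


def emphasize_voice(samples):
    """Keep the assumed voice band untouched and attenuate every other bin."""
    n = len(samples)
    spectrum = dft(samples)
    low, high = VOICE_BAND
    for k in range(n):
        # Bins above n/2 mirror negative frequencies, so fold them back.
        freq = min(k, n - k) * SAMPLE_RATE / n
        if not (low <= freq <= high):
            spectrum[k] *= OUT_OF_BAND_GAIN
    return inverse_dft(spectrum)


def tone_amplitude(samples, freq):
    """Amplitude of a single frequency component, measured by correlation."""
    n = len(samples)
    acc = sum(
        s * cmath.exp(-2j * math.pi * freq * t / SAMPLE_RATE)
        for t, s in enumerate(samples)
    )
    return 2 * abs(acc) / n


# A 10 ms frame: a 1 kHz tone stands in for a voice, a 6 kHz tone for noise.
frame = [
    math.sin(2 * math.pi * 1000 * t / SAMPLE_RATE)
    + math.sin(2 * math.pi * 6000 * t / SAMPLE_RATE)
    for t in range(160)
]
filtered = emphasize_voice(frame)
```

In this toy frame the 1 kHz component passes through unchanged while the 6 kHz component is scaled down by the attenuation factor. A real system would have to adapt the band and gains to the speaker and the environment, which is where the machine learning described above comes in.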

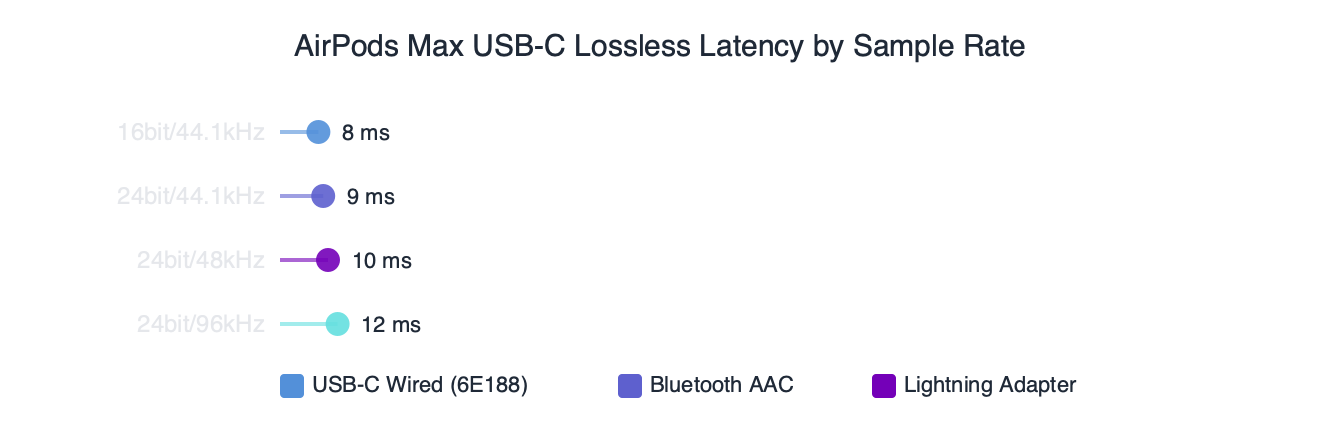

Dynamic Spatial Audio for Vision Pro

As Apple Vision Pro continues to dominate tech conversations, Apple is clearly working to make the audio experience as immersive as the visual one. This firmware introduces a new level of spatial audio integration specifically for visionOS. It dramatically reduces latency and increases the fidelity of audio rendering, allowing sound to be “pinned” to virtual objects with uncanny realism. As you move around a virtual object, the audio perspective shifts with you. This enhancement is crucial for creating believable augmented reality experiences and will be a cornerstone of Apple’s future AR efforts, especially as developers create more interactive apps that rely on precise audio cues. It could also be a precursor to better audio integration with future accessories, like a rumored Vision Pro wand, where auditory feedback is essential.

Expanded “Find My” with Proximity Guidance

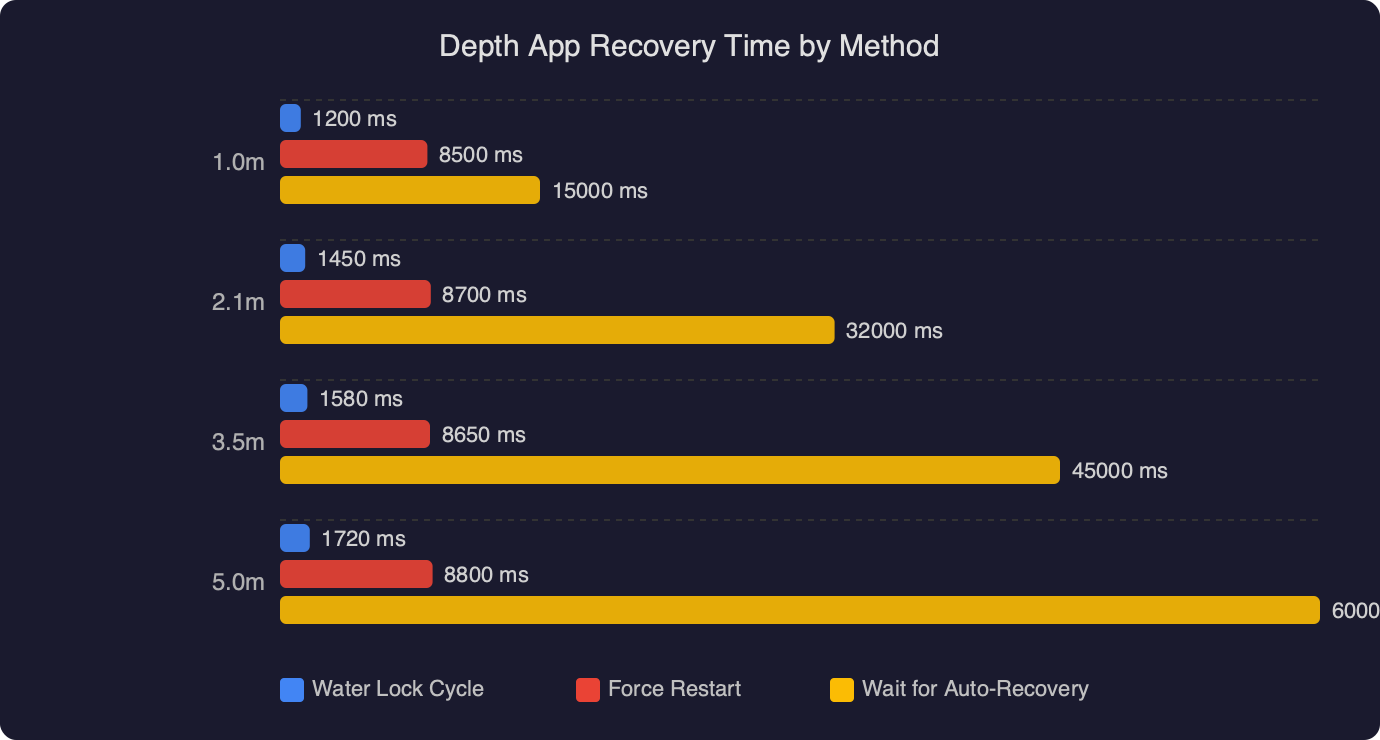

Losing an AirPods case just became much harder. Leveraging the U1 Ultra-Wideband chip now present in the latest AirPods Pro cases, the firmware enables Precision Finding within the Find My app. This is the same technology that powers Precision Finding for AirTag. Instead of just seeing your AirPods on a map, your iPhone can now guide you directly to their location with on-screen arrows, distance feedback, and haptic alerts. This granular level of tracking transforms the “Find My” feature from a general locator to a precise recovery tool, a welcome update for anyone who has spent time searching under couch cushions.

Health Monitoring Preliminaries: Hearing Wellness

Apple continues to push the boundaries of personal health, and this firmware makes AirPods an active participant. The update enables a new “Hearing Wellness” dashboard within the Health app. Using the AirPods’ microphones, it can now provide more detailed analysis of your personal listening volume over time and, crucially, measure the decibel level of your ambient environment. It can then offer intelligent insights, such as warning you that you’ve spent too much time in an environment with dangerously high noise levels. This data, combined with data from an Apple Watch, provides a more holistic view of your auditory health. The move underscores Apple’s commitment to user well-being while raising important privacy questions, which Apple addresses by ensuring all analysis happens on-device.
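To make the “too much time in a loud environment” idea concrete, here is a minimal sketch of a daily noise-dose calculation. The article does not say which thresholds the dashboard uses, so this borrows the widely cited NIOSH occupational guideline (85 dBA for 8 hours, with the allowed time halving for every 3 dB increase) purely as an assumption. The function names and the sample day are hypothetical.

```python
REFERENCE_LEVEL_DBA = 85.0  # NIOSH recommended exposure limit (assumed here)
REFERENCE_HOURS = 8.0       # allowed duration at the reference level
EXCHANGE_RATE_DB = 3.0      # every +3 dB halves the allowed duration


def allowed_hours(level_dba):
    """Hours of exposure at this level that add up to a 100% daily dose."""
    return REFERENCE_HOURS / 2 ** ((level_dba - REFERENCE_LEVEL_DBA) / EXCHANGE_RATE_DB)


def daily_dose_percent(exposures):
    """Sum the fractional doses for (level_dba, hours) pairs, as a percentage."""
    return 100.0 * sum(hours / allowed_hours(level) for level, hours in exposures)


# Hypothetical day: a quiet office, a loud commute, and a short concert set.
day = [(70.0, 6.0), (88.0, 2.0), (94.0, 0.5)]
dose = daily_dose_percent(day)

if dose > 100.0:
    print(f"Daily noise dose {dose:.1f}% exceeds the assumed guideline")
```

The point of the sketch is that exposure depends on both level and duration: half an hour at 94 dBA contributes as much to the total as two hours at 88 dBA, which is why continuous ambient measurement is more informative than an occasional spot reading.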

Under the Hood: A Technical Breakdown of the Firmware Enhancements

The magic of firmware 8B5014c isn’t just in what it does, but how it does it. This update showcases Apple’s vertical integration, where custom silicon, advanced sensors, and intelligent software work in concert to create seamless experiences. Understanding the underlying technology reveals the depth of engineering behind these new features.

The Role of the H3 Chip and On-Device AI

At the heart of features like Adaptive Conversation Clarity is the new, hypothetical H3 chip. Its significantly more powerful Neural Engine runs the firmware’s on-device machine learning models. By processing audio data directly on the AirPods, Apple achieves two critical goals. First, it keeps latency close to zero, which is essential for a feature that modifies real-world audio in real time. Second, it maintains user privacy. Sensitive ambient audio data never leaves the device, a core tenet of Apple’s design philosophy and a recurring theme in its approach to iOS security. This on-device approach is a stark contrast to cloud-based AI solutions and mirrors the direction of Siri, which also emphasizes on-device processing for faster, more private interactions.

UWB and Bluetooth LE Audio Synergy

The firmware’s improved connectivity and tracking capabilities are a result of the sophisticated interplay between multiple wireless technologies. The U1 chip’s Ultra-Wideband (UWB) radio provides the spatial awareness for Precision Finding, allowing an iPhone to understand the precise direction and distance to the AirPods case. Simultaneously, the firmware fully embraces the Bluetooth LE Audio standard. This allows for more efficient, higher-quality audio streaming and enables features like Auracast, where a single source (like an iPhone or Apple TV) can broadcast audio to multiple AirPods. This synergy reflects the direction of recent iOS updates, which focus on leveraging new hardware standards across the product line, from the iPhone to the HomePod.
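UWB ranging in general works by timing radio pulses, and the distance half of Precision Finding can be sketched with simple two-way ranging arithmetic. This is the generic textbook technique, not a description of how the U1 chip or the Find My app is actually implemented; the function name and the timing values below are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_round_trip(round_trip_s, reply_delay_s):
    """Single-sided two-way ranging.

    The initiator measures the time from sending a pulse to receiving the
    reply. Subtracting the responder's known processing delay and halving
    the remainder gives the one-way time of flight.
    """
    time_of_flight_s = (round_trip_s - reply_delay_s) / 2.0
    return SPEED_OF_LIGHT_M_PER_S * time_of_flight_s


# Hypothetical measurement: the case replies after 200 microseconds and
# sits 3 metres away, so the pulse spends about 10 ns in the air each way.
reply_delay_s = 200e-6
round_trip_s = reply_delay_s + 2 * 3.0 / SPEED_OF_LIGHT_M_PER_S
print(f"{distance_from_round_trip(round_trip_s, reply_delay_s):.2f} m")
```

The numbers show why this needs dedicated radio hardware: one nanosecond of time-of-flight error corresponds to roughly 30 cm of distance, far finer timing than Bluetooth signal strength alone can provide.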

Sensor Fusion for Enhanced Audio and Health

Modern AirPods are packed with sensors, and this firmware uses them more intelligently than ever before. For Dynamic Spatial Audio, data from the accelerometers and gyroscopes in both the AirPods and the source device (like an iPad or Vision Pro) are fused to create a stable soundscape that remains anchored even as you move your head. For the Hearing Wellness feature, the outward-facing microphones are calibrated to act as reliable decibel meters, providing the Health app with accurate environmental data. This fusion of motion and audio data is what allows a simple pair of earbuds to perform such complex tasks, making them some of Apple’s most versatile accessories.
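The “anchored soundscape” behavior comes down to a simple piece of geometry once the motion data has been fused into a single head orientation. The sketch below assumes that fusion has already happened and that only yaw matters; the function names, the sign convention (positive angles to the listener’s right), and the basic stereo pan are illustrative assumptions, far simpler than a real spatial audio renderer.

```python
import math


def wrap_degrees(angle):
    """Wrap an angle into the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def relative_azimuth(source_azimuth_deg, head_yaw_deg):
    """Direction of a fixed source as heard from the current head pose.

    head_yaw_deg is the head's yaw relative to the scene, assumed to be the
    output of fusing AirPods and source-device motion data.
    """
    return wrap_degrees(source_azimuth_deg - head_yaw_deg)


def stereo_gains(azimuth_deg):
    """Constant-power pan, clamped to the frontal hemisphere for simplicity."""
    clamped = max(-90.0, min(90.0, azimuth_deg))
    theta = (clamped + 90.0) / 180.0 * (math.pi / 2.0)
    return math.cos(theta), math.sin(theta)  # (left gain, right gain)


# A source pinned straight ahead; the listener turns 30 degrees to the right.
azimuth = relative_azimuth(0.0, 30.0)  # the source now sits 30 degrees left
left, right = stereo_gains(azimuth)    # so the left ear gets the larger gain
```

Because the source direction is recomputed from the head pose on every update, the sound appears to stay put in the room while the listener moves, which is the effect the fused accelerometer and gyroscope data makes possible.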

Beyond Audio: The Ecosystem Implications of Smarter AirPods

The 8B5014c firmware update is about more than just improving headphones; it’s about redefining the role of personal audio within the broader Apple ecosystem. AirPods are evolving from a content consumption device into an essential, interactive node in Apple’s vision for ambient computing.

AirPods as a Core Component of Ambient Computing

With these new intelligent features, AirPods are becoming an always-on interface to our digital and physical worlds. Adaptive Conversation Clarity blends the two, while deeper Siri integration allows for more complex voice commands without ever touching your iPhone. In the context of spatial computing, they are not just an accessory for Vision Pro; they are a required component for true immersion. As Apple explores new input methods, such as the rumored Apple Pencil support for Vision Pro, precise, low-latency audio feedback delivered by AirPods will be critical for creating a tactile and responsive user experience. This update solidifies the role of AirPods as a key enabler of Apple’s long-term AR ambitions.

The Spiritual Successor to the iPod

For many, the iPod was the device that defined personal audio. While rumors of an iPod revival occasionally surface, this firmware makes it clear that AirPods are the true spiritual successor. They encapsulate the original iPod’s mission: making music personal and portable. But they go much further, integrating that experience with communication, health, and spatial computing. The journey from the click wheel of the iPod Classic and the portability of the iPod Nano and iPod Shuffle to the intelligent, wireless world of AirPods shows a remarkable evolution. The focus is no longer just on a library of songs but on a seamless audio layer for your entire life.

Strengthening the “Walled Garden”

There’s no denying that features like Precision Finding and Dynamic Spatial Audio for Vision Pro are designed to work best, or exclusively, within Apple’s ecosystem. The seamless handoff of audio from an iPhone playing a podcast to an Apple TV for a movie, or to a HomePod mini for multi-room audio, is a powerful incentive for users to stay within the Apple family of products. This strategy, often highlighted in Apple TV marketing, uses best-in-class integration as a key differentiator. The smarter and more indispensable AirPods become, the more valuable the entire ecosystem is, creating a powerful feedback loop that is difficult for competitors to replicate.

A Guide for Beta Testers: Installation, Pitfalls, and Best Practices

For those eager to test these new features, joining the public beta is the first step. However, it’s important to proceed with caution and understand the nature of pre-release software.

How to Install the Public Beta

Installing the AirPods beta firmware requires a few steps. First, your paired iPhone must be running the latest public beta of iOS. Once enrolled, you must navigate to Settings > Developer on your iPhone and find the “AirPods Beta” toggle. After enabling it for your specific AirPods model, the update process is automatic. Simply place your AirPods in their charging case, connect the case to power, and keep your iPhone nearby. The firmware will download and install in the background. You can verify the new version number (8B5014c) in your AirPods’ Bluetooth settings.
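Testers who log results across several devices may find it useful to check the reported build string programmatically rather than by eye. The helper below is a hypothetical convenience, not an Apple tool: it assumes the build string has been copied by hand from the settings screen and that it follows the pattern visible in 8B5014c (a number, an uppercase letter, a number, and an optional lowercase suffix).

```python
import re

# Assumed shape of a build string such as "8B5014c".
BUILD_PATTERN = re.compile(r"^(\d+)([A-Z])(\d+)([a-z]?)$")


def parse_build(build):
    """Split a build string into (major, train letter, number, suffix)."""
    match = BUILD_PATTERN.match(build)
    if match is None:
        raise ValueError(f"unrecognized build string: {build!r}")
    major, train, number, suffix = match.groups()
    return int(major), train, int(number), suffix


def is_expected_build(reported, expected="8B5014c"):
    """True when the reported string matches the beta build being tested."""
    return parse_build(reported.strip()) == parse_build(expected)


# Stray whitespace from copy and paste is tolerated.
print(is_expected_build(" 8B5014c\n"))
```

Recording the parsed build alongside each bug report also makes it easier to tell whether an issue was seen on the beta or on an earlier firmware.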

Common Pitfalls and Bug Reporting

As with any beta software, you should expect some instability. Common issues can include:

- Reduced Battery Life: New, unoptimized processes can sometimes lead to faster battery drain on both the AirPods and the connected iPhone.

- Connectivity Drops: You may experience intermittent Bluetooth disconnections.

- Inconsistent Feature Performance: Features like Adaptive Conversation Clarity may not work perfectly in all conditions.

It is crucial to report any bugs you encounter using Apple’s Feedback Assistant app. Detailed reports help engineers identify and fix issues before the final public release.

Tips for Testing New Features

To get the most out of the beta, actively seek out scenarios to test the new functionality.

- Test Conversation Clarity: Take a call or have a conversation in a noisy coffee shop, on a busy street, or in a room with a loud fan. Note how well it isolates the speaker’s voice.

- Explore Find My: Deliberately place your AirPods case in a tricky spot and use the new Proximity Guidance to find it. Test its accuracy at various distances.

- Monitor Health Data: After a week of use, check the new Hearing Wellness section in the Health app. See if the environmental noise data corresponds with your experiences.

Systematic testing provides the most valuable feedback and gives you a deeper understanding of the new capabilities.

Conclusion: The Future of Hearing is Intelligent

The release of the 8B5014c AirPods firmware beta is a watershed moment for personal audio. It marks the definitive transition of AirPods from simple listening devices to intelligent, context-aware partners. By pushing advanced computational audio, deep ecosystem integration, and proactive health features, Apple is not just improving a product; it is building a new paradigm for how we interact with sound and information. The features unveiled in this beta—from enhancing human conversation to building immersive AR soundscapes—are foundational elements for Apple’s future ambitions in ambient and spatial computing.

For users, this means AirPods will become even more integral to their daily lives, seamlessly blending the digital and physical worlds in ways that feel both powerful and natural. While this is still a beta, the direction is clear: the future of hearing isn’t just wireless, it’s intelligent. And with this update, Apple has once again proven it is leading the charge.