The landscape of spatial computing is undergoing a significant transformation. Since its launch, the Apple Vision Pro has relied on a controller-free philosophy, using eye tracking and hand gestures to navigate the operating system. However, as the platform matures from a consumption device into a professional creative powerhouse, the demand for precision input has grown. Recent developments in the third-party accessory market, specifically high-precision styluses, have made that demand one of the more notable stories in Apple Vision Pro news.

While Apple has historically kept its proprietary input methods close to the chest (as the Apple Pencil’s exclusive integration with the iPad shows), the emergence of supported third-party “wands,” or styluses, for the Vision Pro marks a pivotal shift. This article examines the technical specifications, user experience, and ecosystem implications of using a stylus in a 3D environment. It also explores how this hardware bridges the gap between traditional digital art and spatial computing, effectively creating a new category of Apple input device.

The Shift from Hand Tracking to Precision Instruments

To understand the significance of a stylus for the Vision Pro, one must first look at the evolution of Apple’s input devices. The journey began with the click wheel, a staple of the iPod classic, and evolved into the multi-touch interface that defines the iPhone today. Each input method was tailored to its medium. For the Vision Pro, the “look and pinch” method is excellent for navigation but lacks the tactile feedback and sub-millimeter precision required for professional 3D modeling, medical imaging, or detailed illustration.

Bridging the Gap with Logitech’s MX Ink Technology

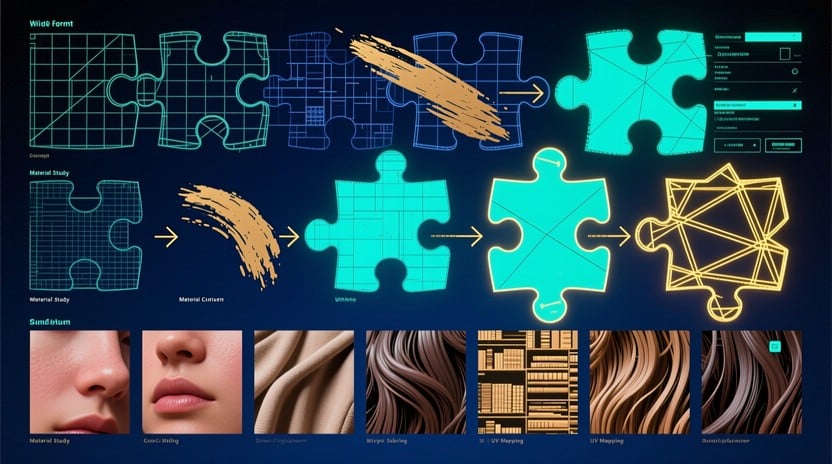

The introduction of devices like the Logitech MX Ink represents the first serious foray into spatial styluses, or “wands,” for the Vision Pro. Unlike a standard stylus that interacts with a 2D digitizer, a spatial stylus must be tracked with six degrees of freedom (6DoF). The system knows the stylus’s position along the X, Y, and Z axes as well as its orientation in pitch, yaw, and roll.
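To make the 6DoF idea concrete, here is a minimal Python sketch of a single pose sample and how an app might derive the pen-tip position from it. The `StylusPose` class, the axis conventions (pen pointing down -Z at rest, +Y up), and the 15 cm offset from the tracked reference point to the tip are all assumptions for illustration. This is not a real visionOS or Logitech API.

```python
import math
from dataclasses import dataclass


@dataclass
class StylusPose:
    """Hypothetical 6DoF sample: position in metres, orientation in radians."""
    x: float
    y: float
    z: float
    pitch: float  # rotation about the X axis
    yaw: float    # rotation about the Y axis
    roll: float   # rotation about the pen's own axis

    def forward(self):
        """Unit vector along the pen barrel.

        Equivalent to rotating the rest direction (0, 0, -1) by pitch about X,
        then by yaw about Y. Roll spins the pen about its own axis, so it
        does not change this vector.
        """
        cp = math.cos(self.pitch)
        return (-math.sin(self.yaw) * cp, math.sin(self.pitch), -math.cos(self.yaw) * cp)

    def tip(self, length=0.15):
        """Tip position, assuming (x, y, z) is a tracked point `length` metres behind the tip."""
        fx, fy, fz = self.forward()
        return (self.x + fx * length, self.y + fy * length, self.z + fz * length)
```

Roll matters for things like rotating a brush nib, but it leaves the tip position unchanged, which is why `tip()` ignores it.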

This technology allows users to draw in mid-air, manipulating 3D objects as if they were physical sculptures. For artists accustomed to the iPad and the tactile resistance of glass, this requires a paradigm shift. The stylus supports switching between 2D surface drawing (on a physical mat or desk) and 3D aerial manipulation. This duality is critical for workflows that involve sketching a schematic on a virtual whiteboard and then pulling that schematic out into a volumetric 3D model.
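One plausible way for an app to choose between the two modes is to model the desk as a plane and check how close the pen tip is to it. The sketch below uses a hypothetical `classify_contact` helper and an assumed 5 mm threshold. A production system would likely also consult the tip’s pressure sensor and add hysteresis so the mode does not flicker near the boundary.

```python
import math


def classify_contact(tip, plane_point, plane_normal, threshold=0.005):
    """Decide whether the pen tip is drawing on a surface or in the air.

    Returns ("surface", point projected onto the plane) when the tip is within
    `threshold` metres of the plane, otherwise ("air", tip).
    """
    nx, ny, nz = plane_normal
    norm = math.sqrt(nx * nx + ny * ny + nz * nz)
    nx, ny, nz = nx / norm, ny / norm, nz / norm  # normalise the plane normal
    dx, dy, dz = (tip[i] - plane_point[i] for i in range(3))
    dist = dx * nx + dy * ny + dz * nz  # signed distance from the plane
    if abs(dist) <= threshold:
        # Snap the stroke onto the surface so 2D drawing stays flat.
        return "surface", (tip[0] - dist * nx, tip[1] - dist * ny, tip[2] - dist * nz)
    return "air", tuple(tip)
```

For a desk at a height of 0.7 m, `classify_contact(tip, (0, 0.7, 0), (0, 1, 0))` treats any tip within 5 mm of the desktop as surface drawing.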

Technical Specifications and Haptics

A key differentiator in this new wave of Apple accessories is haptic feedback. When drawing in the air, there is no physical resistance. To compensate, actuators inside the stylus produce vibrations that mimic the sensation of texture or the “click” of locking an object in place. This is similar in spirit to the Taptic Engine in the Apple Watch, but tuned for the nuances of a writing instrument.
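One common way to produce that “click” is with detents. The object snaps to a grid, and a short haptic pulse fires whenever it crosses into a new grid cell. Here is a minimal single-axis sketch of that logic. `snap_with_detents` and the 1 cm step are hypothetical. Actually sending the pulse to the stylus would go through whatever API the accessory exposes, which is not modeled here.

```python
def snap_with_detents(samples, step=0.01):
    """Snap 1-D drag positions (metres) to a grid and report where detents occur.

    Returns the snapped positions and the indices of samples at which the
    object entered a new grid cell, i.e. where a haptic pulse would fire.
    """
    snapped, clicks = [], []
    last_cell = None
    for i, x in enumerate(samples):
        cell = round(x / step)  # compare integer cells to avoid float-equality issues
        if last_cell is not None and cell != last_cell:
            clicks.append(i)  # hypothetical trigger point for a haptic pulse
        snapped.append(cell * step)
        last_cell = cell
    return snapped, clicks
```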

Pressure sensitivity also remains a requirement. When the tip is pressed against a physical surface, the stylus must transmit pressure data to the Vision Pro with minimal latency. This is achieved through a proprietary wireless protocol that operates alongside Bluetooth. The protocol is intended to keep the connection stable even in environments saturated with signals from AirPods Pro, HomePod mini, and other nearby devices.
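Once pressure data arrives, apps typically map it to stroke properties through a response curve. The following sketch assumes a 12-bit raw reading (0 to 4095) and a simple power curve. The function name, range, and exponent are illustrative assumptions, not the MX Ink’s actual specifications.

```python
def pressure_to_width(raw, raw_max=4095, min_width=0.5, max_width=6.0, gamma=1.8):
    """Map a raw pressure reading to a stroke width in points.

    raw_max (a 12-bit sensor) and gamma are illustrative assumptions.
    """
    p = min(max(raw / raw_max, 0.0), 1.0)  # normalise and clamp to [0, 1]
    curved = p ** gamma  # gamma > 1 keeps light touches thin, for finer control
    return min_width + (max_width - min_width) * curved
```

Exposing `gamma` lets users tune the “feel” of the pen, much as pressure-curve settings do in tablet drivers.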

Deep Dive: Integration with the Apple Ecosystem

The introduction of a stylus for Vision Pro is not an isolated event; it ripples across the entire Apple ecosystem. The interoperability between devices is what makes this development so compelling for power users.

From iPad to Vision Pro: A Seamless Workflow

Consider an architect who begins a draft using an Apple Pencil on an iPad Pro. With the continuity features introduced in recent software updates, they can effectively “hand off” this project to the Vision Pro. The stylus lets them pick up exactly where they left off, but now they can extrude the floor plan into a 3D structure. This synergy is the holy grail of the Apple ecosystem: hardware that dissolves the boundaries between devices.
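The extrusion step itself is simple geometry. This sketch assumes the floor plan arrives as a list of 2D corner points in metres and builds the walls of a prism. How the project is actually handed off between devices is not modeled, and `extrude_floor_plan` is a hypothetical helper.

```python
def extrude_floor_plan(outline, height):
    """Extrude a 2-D floor-plan outline into the walls of a 3-D prism.

    outline: list of (x, y) corner points in metres, in order around the plan.
    Returns (vertices, walls): vertices are (x, y, z) with y as "up", and each
    wall is a quad given as four indices into the vertex list.
    """
    n = len(outline)
    bottom = [(x, 0.0, y) for x, y in outline]  # plan y maps to world z
    top = [(x, float(height), y) for x, y in outline]
    vertices = bottom + top
    # Wall i joins corner i to corner i + 1 (wrapping around), bottom edge then top edge.
    walls = [(i, (i + 1) % n, n + (i + 1) % n, n + i) for i in range(n)]
    return vertices, walls
```

A 4 m by 3 m room extruded to 2.5 m, for example, yields eight vertices and four wall quads.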

The same applies to vision boards. Creatives often use iPads to assemble mood boards; in visionOS, these boards become immersive environments. A stylus allows the user to pin, resize, and annotate these assets faster and more precisely than hand gestures alone.

The Legacy of Input: A Historical Context

It is fascinating to trace this lineage back to earlier devices. The precision required here is far beyond the click wheel of the iPod mini or the simple buttons of the iPod shuffle. Even the touch screen of the iPod touch seems primitive compared to the spatial tracking algorithms a stylus requires. Yet the philosophy remains the same: removing barriers between the user’s intent and the digital output.

Just as the iPod nano eventually gave way to more capable devices, the “finger-only” era of Vision Pro is evolving into a “multimodal input” era. This mirrors the trajectory of Apple TV, which Apple long described as a “hobby” before it grew into a central hub for home entertainment.

Practical Applications and Real-World Scenarios

Who actually needs a stylus for a headset? The use cases are more diverse than one might initially think, extending far beyond digital painting.

Case Study 1: Medical Training and Surgery Planning

In the medical field, precision is non-negotiable. Surgeons using Vision Pro for pre-operative planning can use a stylus to simulate incisions or trace nerve pathways on a 3D MRI scan, with the stylus acting as a proxy for a scalpel. This intersects with Apple’s health efforts, which increasingly focus on proactive health management and professional medical tools. Rotating a virtual heart and annotating specific valves with a pen may also aid learning retention compared with 2D textbook study.
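Tracing a pathway ultimately produces a polyline of 3D points, and one basic measurement an annotation tool might offer is its length. Here is a minimal sketch. It assumes the traced points are already in the scan’s coordinate space (for example, millimetres). `path_length` is a hypothetical helper built on Python’s standard `math.dist`.

```python
import math


def path_length(points):
    """Length of a traced 3-D polyline, in the same units as its coordinates."""
    # Sum the straight-line distance between each pair of consecutive samples.
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


traced = [(0.0, 0.0, 0.0), (3.0, 4.0, 0.0), (3.0, 4.0, 12.0)]
print(path_length(traced))  # 17.0
```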

Case Study 2: Automotive Design

Automotive designers have traditionally used clay models. With Apple’s AR capabilities and a spatial stylus, they can carve virtual clay instead. The stylus allows for sweeping curves and precise detailing that hand gestures might misinterpret. Designers can walk around a full-scale model of a car, reshaping its aerodynamic contours with a flick of the wrist. This is a significant leap for Vision Pro accessories, moving the headset from a viewing device to a design tool.
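Smoothing raw stylus samples into sweeping curves is a classic spline problem. The sketch below uses a uniform Catmull-Rom spline, one common choice that passes through every sample. It is not necessarily the technique any particular design tool uses, and `smooth_stroke` is a hypothetical helper.

```python
def catmull_rom(p0, p1, p2, p3, t):
    """Point on the uniform Catmull-Rom segment between p1 and p2, t in [0, 1]."""
    t2, t3 = t * t, t * t * t
    return tuple(
        0.5 * (2 * b + (-a + c) * t + (2 * a - 5 * b + 4 * c - d) * t2
               + (-a + 3 * b - 3 * c + d) * t3)
        for a, b, c, d in zip(p0, p1, p2, p3)
    )


def smooth_stroke(samples, steps=8):
    """Densify raw stylus samples into a smooth curve through every sample.

    The first and last samples are duplicated so the curve starts and ends on them.
    """
    if len(samples) < 2:
        return list(samples)
    pts = [samples[0]] + list(samples) + [samples[-1]]
    out = []
    for i in range(1, len(pts) - 2):  # one segment per pair of consecutive samples
        for s in range(steps):
            out.append(catmull_rom(pts[i - 1], pts[i], pts[i + 1], pts[i + 2], s / steps))
    out.append(tuple(samples[-1]))
    return out
```

Because the curve passes through the original samples, the designer’s intended control points are preserved while the gaps between them are filled smoothly.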

Case Study 3: Audio Engineering in 3D Space

While coverage of the AirPods Max often focuses on sound quality, the spatial audio capabilities of the Vision Pro allow engineers to mix sound in 3D space. A stylus can be used to grab audio nodes and place them precisely in the room, for example dragging a drum track to the back-left corner or pulling a vocal track front and center. This visual-spatial interaction with sound is a novel concept that leverages the precision of the new input hardware.
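Placing a node in the room amounts to converting its 3D position into a direction and distance relative to the listener, which a spatial audio renderer can then use. Here is a minimal sketch. It assumes a listener facing -Z with +X to the right and +Y up, and it ignores head rotation. `node_direction` is a hypothetical helper, not an Apple audio API.

```python
import math


def node_direction(listener, node):
    """Azimuth and elevation (degrees) and distance (metres) of an audio node.

    Assumes the listener faces -Z, with +X to the right and +Y up.
    Azimuth 0 is straight ahead, +90 is to the right, and +/-180 is behind.
    """
    dx, dy, dz = (n - l for n, l in zip(node, listener))
    distance = math.sqrt(dx * dx + dy * dy + dz * dz)
    azimuth = math.degrees(math.atan2(dx, -dz))
    elevation = math.degrees(math.atan2(dy, math.hypot(dx, dz)))
    return azimuth, elevation, distance
```

With the listener at (0, 1.6, 0), a drum node dragged to (-1, 1.6, 1) comes out at an azimuth of -135 degrees, i.e. behind and to the left.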

Implications for Privacy and Security

With new hardware comes new data. A stylus that tracks fine motor skills generates biometric data, which makes Apple’s privacy and security practices critical here. Users need assurance that the stylus telemetry, which could theoretically be used to analyze behavioral patterns or even diagnose neurological conditions, remains processed on-device. Just as Apple emphasizes on-device processing for Siri to protect user intent, the spatial coordinates of a stylus must be encrypted to prevent “motion fingerprinting.”

Furthermore, unlike an AirTag, whose location is reported through the Find My network, the stylus’s location data stays local to the headset. This distinction is vital for enterprise clients worried about corporate espionage through peripheral data leaks.

Pros and Cons of Spatial Stylus Adoption

While the technology is impressive, it is not without its challenges. Below is a detailed breakdown of the advantages and drawbacks of integrating a stylus into the Vision Pro workflow.

The Advantages

- Precision: Offers sub-millimeter accuracy that hand tracking cannot match, since hand tracking degrades when fingers are occluded or lighting is poor.

- Ergonomics: Holding a pen is a familiar ergonomic posture for millions of professionals, reducing the fatigue associated with holding arms up for gesture control.

- Tactile Feedback: Physical buttons on the stylus allow for immediate mode switching (e.g., from draw to erase) without navigating software menus.

- Legacy Support: It bridges the gap for users transitioning from Wacom tablets or iPads, making the learning curve for spatial computing less steep.

The Challenges

- Battery Management: Another device to charge. Unlike the long-running iPods now enjoying a nostalgic revival, active styluses require frequent charging, often via USB-C or proprietary docks.

- Cost: These are premium accessories. The price point creates a barrier to entry, much like the premium pricing of AirPods.

- Loss and Storage: The Vision Pro has no magnetic latch for a stylus, so unlike an Apple Pencil on an iPad, it must be carried separately. This raises the same question AirTag was designed to answer: how do you keep track of small, expensive accessories?

- Software Adoption: The hardware is only as good as the software. Developers need to update their apps to support 6DoF input, which takes time.

Future Outlook: Will Apple Make Their Own?

The release of the Logitech MX Ink has fueled speculation about the Apple Pencil’s future. Will Apple release a first-party “Apple Pencil Pro Vision”? Historically, Apple has let third parties test the waters before releasing its own polished version; third-party iPad styluses, for example, predated the Apple Pencil by years.

If Apple does enter the market, we can expect deep integration with Siri (perhaps for voice-controlled tool switching) and potentially haptic feedback that rivals the best gaming controllers. There is also the possibility of integration with the Apple Watch, which could act as a secondary palette while the other hand holds the pencil.

We may also see a resurgence of interest in compact form factors; the stylus recalls the portability that made the iPod nano popular. As components shrink, these wands will become more capable, potentially including their own processors to offload tracking work from the headset.

Best Practices for Early Adopters

For those looking to integrate a stylus into their Vision Pro setup immediately, consider the following tips:

- Lighting Matters: Just as Apple’s AR guidance emphasizes good lighting for world tracking, the optical sensors on the stylus (or the headset cameras tracking it) require a well-lit environment to maintain 6DoF accuracy.

- Surface Calibration: If drawing on a physical desk, ensure the surface is non-reflective. Glass desks can confuse the tracking sensors, a common pitfall in optical tracking.

- Customize Controls: Use the companion app to map the physical buttons to your most-used tools. This customization is key to speed, similar to setting up shortcuts in macOS.

- Ergonomics: Even with a stylus, take breaks. Spatial computing engages different muscle groups than desk work.
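Button customization usually boils down to a lookup table from physical controls to tools, with user preferences layered over defaults. The button names and tool identifiers below are hypothetical; a real companion app defines its own.

```python
# Hypothetical button names and tool identifiers; real ones depend on the stylus and app.
DEFAULT_BINDINGS = {
    "primary": "draw",
    "secondary": "erase",
    "tail": "undo",
}


def resolve_tool(button, bindings=None, overrides=None):
    """Return the tool bound to `button`, letting user overrides win over defaults.

    Returns None for buttons that have no binding.
    """
    table = dict(DEFAULT_BINDINGS if bindings is None else bindings)
    table.update(overrides or {})
    return table.get(button)
```

Keeping defaults and overrides separate means a user can reset to factory bindings without losing the app’s baseline mapping.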

Conclusion

The arrival of stylus support for the Apple Vision Pro is more than just a new accessory launch; it is a maturation of the platform. It signals that spatial computing is ready to move beyond passive consumption and into the realm of high-fidelity creation. By combining the intuitive nature of hand tracking with the precision of a dedicated instrument, the ecosystem is opening doors for architects, designers, and surgeons to work in ways previously thought impossible.

From the humble beginnings of the iPod’s click wheel to the sophisticated spatial tracking of today, Apple’s journey has always been about refining the connection between human and machine. Whether through a third-party solution like Logitech’s or a future first-party Apple Pencil, the “spatial wand” is set to become an indispensable tool in the arsenal of the modern creative professional. As software updates roll out and developers harness this new input method, the line between the physical and digital worlds will continue to blur, fulfilling the true promise of spatial computing.